Our Human Physiology Makes Us Intelligent

I recently listened to a radio talk-show host interview the technology editor of the New York Times about artificial intelligence (AI). Naturally, the technology editor used the metaphor of a neural network to describe AI. The interviewer immediately picked up on the idea that AI systems were facsimiles of human brains. Even though the editor kept reminding her that a neural network was a metaphor, the interviewer quickly became comfortable with the idea that nodes in AI networks were facsimiles of human synapses. She appeared less comfortable when the editor began to explain that nodes are not synapses but simply a way of describing the decomposition of a much larger mathematical algorithm.

This is a chart created by Fjodor Van Veen of the Asimov Institute, which he calls a “mostly complete” chart of neural networks. Regardless of how the nodes are configured or which label (e.g., Feed Forward, Markov Chain, Deep Convolutional Network) is used to distinguish one configuration from another, all configurations include some grouping or “layering” of nodes into an input layer, a hidden layer, and an output layer. An excellent summary of the functions of the different layers of nodes is provided by David J. Harris on Stack Exchange.

- “Each layer can apply any function you want to the previous layer (usually a linear transformation followed by a squashing nonlinearity).

- The hidden layers’ job is to transform the input layer into something that the output layer can use.

- The output layer transforms the hidden layer activations into whatever scale you wanted your output to be on”

Harris continues:

“A feed-forward neural network applies a series of functions to the data. The exact functions will depend on the neural network you’re using: most frequently, these functions each compute a linear transformation of the previous layer, followed by a squashing nonlinearity….The roles of the different layers will depend on the functions being computed.”
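Harris's description of a layer, a linear transformation followed by a squashing nonlinearity, can be sketched in a few lines of Python. This is only an illustration, not code from Harris's answer: the sigmoid is one common choice of squashing function, and the names `sigmoid` and `layer`, along with every weight and bias below, are made up for the example.

```python
import math

def sigmoid(z):
    """Squash any real number into the open interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def layer(inputs, weights, biases):
    """Apply one layer: a linear transformation, then a squashing nonlinearity.

    weights holds one row of coefficients per output node and biases holds
    one offset per output node. All values used here are illustrative.
    """
    outputs = []
    for row, bias in zip(weights, biases):
        linear = sum(w * x for w, x in zip(row, inputs)) + bias  # linear step
        outputs.append(sigmoid(linear))                          # squashing step
    return outputs

# Two inputs feeding a layer of three nodes (hypothetical numbers).
activations = layer(
    inputs=[0.5, -1.0],
    weights=[[1.0, 2.0], [0.0, 0.0], [-3.0, 0.5]],
    biases=[0.0, 0.0, 1.0],
)
```

Whatever the inputs are, every value in `activations` lands between 0 and 1. That is all the "squashing" amounts to: arithmetic, with nothing physiological about it.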

Going forward, it’s important to keep in mind that terms like “neural network” are metaphors for algorithms to which we have assigned the label “artificial intelligence” (AI). Neural networks are not “intelligent” in the way a human being is because, while they have memory and logic, they lack emotion and feelings, both of which are critical physiological elements of human intelligence.

According to eminent neuroscientist Antonio Damasio, “we are only just beginning to understand the foundations of human intelligence and consciousness that cannot be captured in an algorithmic formula divorced from the functions of the body and the long evolution of our species and its microbiome.”

According to science fiction writer Joseph Reinemann, even Vulcans like Mr. Spock “feel”; in fact, they feel much, much more passionately than most. But they don’t express it.

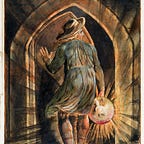

Vulcans like Spock keep their feelings carefully controlled by deconstructing them and thus robbing them of their potency. In doing so, they’re able to prevent their feelings from affecting any of their outward actions.

Controlling their emotions is a constant struggle for all but the most devoted of Vulcans: those who have undergone the Kolinahr ritual. Kolinahr masters are said to not feel anything at all. Spock almost achieved this, but V’Ger, the massive sentient entity that destroyed anything it encountered by digitizing it for its memory chamber while en route to find its “Creator”, called out for that creator. The call stirred something within Spock and ruined his chances of achieving Kolinahr.

The objective of a machine-learning model, the prevailing type of AI, is to identify statistically reliable relationships between certain features of the input data and a “target variable” or outcome. Newton’s third rule of induction is the first unwritten rule of machine learning: “Whatever is true of everything we’ve seen is true of everything in the universe.”

The authors of “The Simple Economics of Machine Intelligence” have been even more precise in their explanation of what machine learning is and is not. According to Ajay Agrawal, Joshua Gans, and Avi Goldfarb, all professors at the University of Toronto’s Rotman School, machine intelligence technology is, in essence, a “prediction technology”, relying on statistics and probability.
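To make the phrase “prediction technology” concrete, here is a minimal sketch of a model that learns a statistical relationship between one feature and a target variable, then predicts an outcome it has never seen. The data are invented for illustration, ordinary least squares is just one simple technique among many, and the names `fit_line` and `predict` are hypothetical.

```python
def fit_line(xs, ys):
    """Ordinary least squares for one feature: returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Made-up observations: feature = hours studied, target = exam score.
hours = [1.0, 2.0, 3.0, 4.0, 5.0]
scores = [52.0, 54.0, 61.0, 63.0, 70.0]

slope, intercept = fit_line(hours, scores)

def predict(x):
    """Assume the relationship seen so far also holds for unseen inputs."""
    return slope * x + intercept

predicted_score = predict(6.0)  # 73.5 for the data above
```

The prediction for six hours rests entirely on the assumption that what was true of the five observed cases is true of the sixth. The model has no other grounds for its answer.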

However, the human mind is far from being a “prediction technology”. According to Dr. Damasio, “Feelings tell the mind without any word being spoken of the good or bad direction of the life processes at any moment within its respective body.”

Dr. Robert Burton’s work complements Dr. Damasio’s when he writes:

“… the (human) brain creates the involuntary sensation of ‘knowing’ and how this sensation is affected by everything from genetic predispositions to perceptual illusions common to all body sensations.” — Robert A. Burton M.D.

All sensory receptors for vision, hearing, touch, taste, smell, and balance receive distinct physical stimuli and transduce the signal into an electrical action potential. This action potential then travels along afferent neurons to specific brain regions where it is processed and interpreted.

Synesthetes, or people who have synesthesia (a condition in which more than one of a person’s senses are “crossed”), may see sounds, taste words, or feel a sensation on their skin when they smell certain scents.

They may also see abstract concepts like time projected in the space around them. Scientists used to think synesthesia was quite rare, but they now think up to 4 percent of the population has some form of the condition. This is how a young lady with synesthesia “sees music”, which most of us only hear.

Synesthesia exemplifies how human intelligence is much more complex than what we currently characterize as “Artificial Intelligence (AI)”. Human intelligence manifests itself as sensory information which enters the neocortex by way of the thalamus. The transfer of sensory signals from the periphery to the cortex is not simply a one-to-one relay but a dynamic process involving reciprocal communication between the cortex and thalamus.

Neural networks, a principal type of artificial intelligence, are typically organized in layers. Layers are made up of a number of interconnected ‘nodes’, each of which contains an ‘activation function’. Patterns are presented to the network via the ‘input layer’, which communicates to one or more ‘hidden layers’ where the actual processing is done via a system of weighted ‘connections’. The hidden layers then link to an ‘output layer’ where the answer is output as shown below:

An AI neural network, like the one illustrated above, is not physiological. It is mathematical. In the above illustration, data in the input layer is labeled as x with subscripts 1, 2, 3, …, m. Nodes in the hidden layer are labeled as h with subscripts 1, 2, 3, …, n. Note that for the hidden layer it’s n and not m, since the number of hidden layer nodes might differ from the number of inputs.

The hidden layer nodes are also labeled with superscript 1. This is so that when you have several hidden layers, you can identify which hidden layer it is: the first hidden layer has superscript 1, the second hidden layer has superscript 2, and so on. Output is labeled as y with a hat.

If we have m input values (x1, x2, …, xm), we call them m features. When we multiply each of the m features by a weight (w1, w2, …, wm) and sum the products, the result is a dot product like this:

$$w \cdot x = \sum_{i=1}^{m} w_i x_i = w_1 x_1 + w_2 x_2 + \dots + w_m x_m$$
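A small sketch may help show how purely arithmetic this is. It follows the notation above (m features x, n hidden nodes h, and an output y with a hat), but the weights and inputs are invented, the sigmoid is only one possible activation function, and bias terms are left out for brevity. The names `dot`, `hidden_weights`, and `output_weights` are hypothetical.

```python
import math

def dot(weights, features):
    """Multiply each feature by its weight and sum the products."""
    if len(weights) != len(features):
        raise ValueError("weights and features must have the same length")
    return sum(w * x for w, x in zip(weights, features))

def sigmoid(z):
    """An example activation function that maps z into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

# m = 3 input features x1..x3 (illustrative values).
x = [1.0, 2.0, 3.0]

# n = 2 hidden nodes; each node has its own m weights (illustrative values).
hidden_weights = [
    [0.5, -1.0, 0.5],  # weights into h1
    [0.1, 0.2, 0.3],   # weights into h2
]
h = [sigmoid(dot(w, x)) for w in hidden_weights]

# The output node takes a weighted sum of the n hidden activations.
output_weights = [1.0, -1.0]
y_hat = sigmoid(dot(output_weights, h))
```

Here the first hidden node's weighted sum is exactly 0, so `h[0]` is 0.5. Every step from `x` to `y_hat` is multiplication, addition, and one fixed function.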

This is not how a human brain works. The brain’s logical and reasoning mechanisms, contained in the cerebral cortex, work along with sensory and emotional mechanisms, contained in the hippocampus, to retrieve feelings and thoughts that combine to drive behavior.

Humans make use of fundamental processes of life regulation that include things like emotion and feeling, but we connect them with intellectual processes in such a way that we create a whole new world around us.

Once again, Dr. Damasio sums it up best: “At the point of decision, emotions are very important for choosing. In fact even with what we believe are logical decisions, the very point of choice is arguably always based on emotion.”

__________________________________________________________________

Notes:

- https://www.asimovinstitute.org/author/fjodorvanveen/

- https://stats.stackexchange.com/questions/63152/what-does-the-hidden-layer-in-a-neural-network-compute

- http://ngp.usc.edu/usc-neuroscientist-antonio-damasio-argues-that-fee...acity-for-cultural-creation-a-map-of-the-computational-mind-he-says/

- Domingos, Pedro. The Master Algorithm: How the Quest for the Ultimate Learning Machine Will Remake Our World (p. 66). Basic Books. Kindle Edition.

- Burton, Robert A. On Being Certain: Believing You Are Right Even When You’re Not. St. Martin’s Griffin, New York, 2008.

- https://www.mnn.com/health/fitness-well-being/stories/what-is-synesthesia-and-whats-it-like-to-have-it

- https://towardsdatascience.com/multi-layer-neural-networks-with-sigmoid-function-deep-learning-for-rookies-2-bf464f09eb7f

- https://www.technologyreview.com/s/528151/the-importance-of-feelings/

- http://bigthink.com/experts-corner/decisions-are-emotional-not-logical-the-neuroscience-behind-decision-making